The first week with a new AI image generator always feels like a win. You throw in a prompt, and the results look better than anything you could have mocked up yourself. Then week two hits. You need to batch twenty variations for a campaign, the tool starts queuing slowly, the edits don’t stick, and the credit counter ticks up faster than you expected. That fade from excitement to frustration is exactly why I started testing tools differently—not on demo samples, but on four specific criteria that actually predict whether a tool will work under real deadlines.

Why the Honeymoon with AI Generators Usually Ends After Week Two

The problem isn’t output quality. Most modern models can produce a stunning visual on the first try. The problem is everything that happens after that single image. You need to regenerate because the client wants a slightly different composition. You need to maintain a consistent style across a batch of ten assets. You need to know how many credits a round of failed prompts will cost before you start.

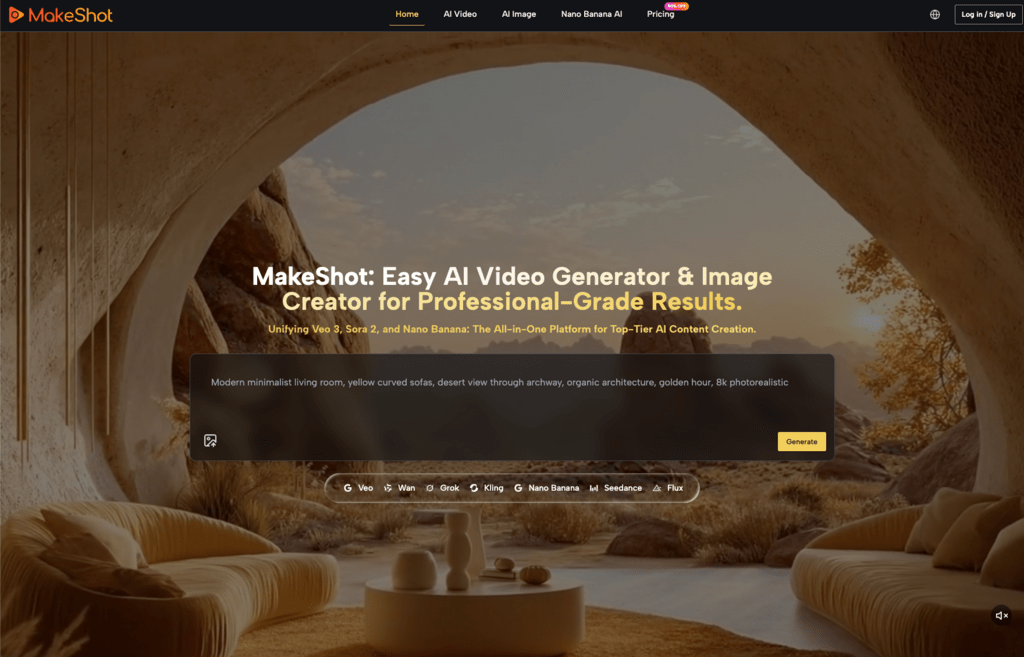

I used Nano Banana AI as a test case because its offering is deliberately focused—text-to-image, image-to-image, restyling, and editing in one workflow. That narrow scope made it easier to isolate the variables that matter most in daily use. After running a few rounds of real work through it, I settled on four criteria that I now apply to every tool I evaluate: generation latency, prompt control and adherence, output editability, and cost predictability.

These aren’t flashy metrics. They won’t appear on any demo reel. But they determine whether a tool becomes part of your pipeline or an expensive experiment you abandon after the free trial.

Latency and Control: The Two Things Demos Don’t Show You

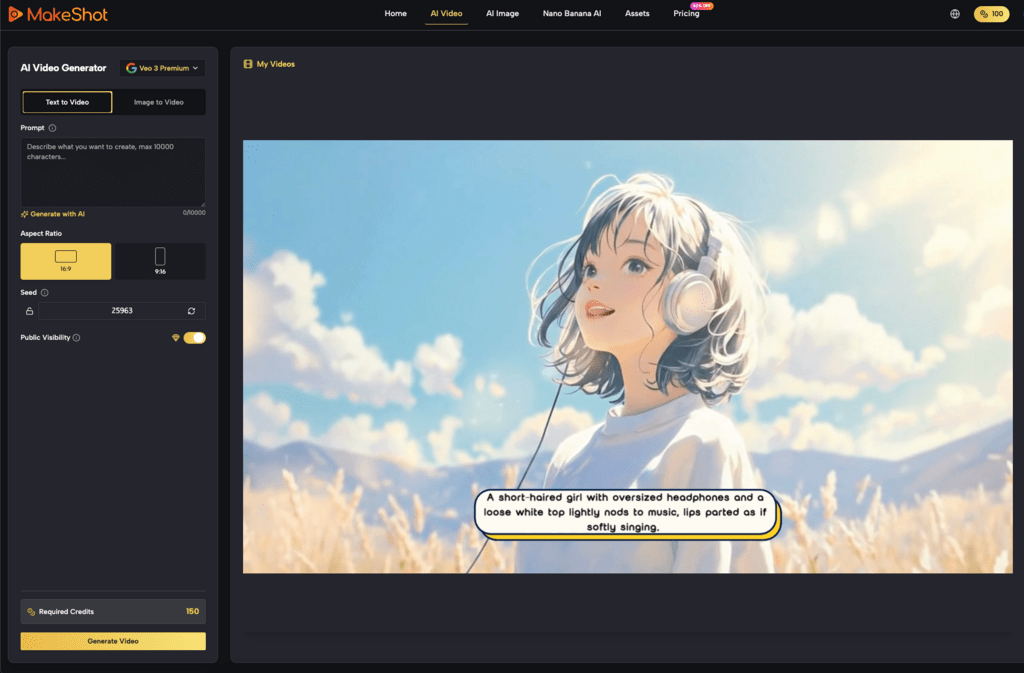

Latency is deceptive. A tool that returns a single image in three seconds can feel fast, but that number doesn’t tell you how it handles a queue of five concurrent generations, or what happens when you need to re-roll a batch because the first pass missed the mark. I ran the same moderately complex prompt through Nano Banana AI and a contrasting tool: “A mid-century modern living room with a mustard yellow sofa, natural light from a large window on the left, and a bookshelf with mostly blue and green books.”

Nano Banana AI’s adherence was solid on spatial positioning: the sofa sat where I described it, the light came from the correct direction, and the color palette matched. But what stood out more was how it handled re-rolls. Instead of resetting the entire generation context, it kept the structural elements consistent while varying details like book arrangement and cushion texture. That matters when you’re iterating on a concept because it reduces the number of full regenerations you need to get a usable asset.

I should be clear about the limits of this test. I only evaluated image generation, not video. I cannot confidently say that these latency and control behaviors carry over to Nano Banana AI workflows involving animation or frame-consistent motion. Video introduces entirely different bottlenecks (timing, keyframes, temporal coherence) that a single tool test doesn’t cover. If you’re evaluating an AI video generator, you’d need to run comparable tests on frame consistency and render queuing under load, which I haven’t done.

Editability and Cost Predictability: Where Many Tools Fall Apart

The real test of an image tool isn’t the first generation—it’s the fifth iteration after a client changes one element. Imagine you’re creating a batch of social ads. The headline image is approved, but the client wants the product moved to the right third of the frame and the background color shifted from teal to slate. In many tools, that means a full regeneration, which may or may not preserve the original style. Nano Banana AI’s in-image editing capability reduces that risk by allowing restyles and local edits without starting from scratch. That saved me multiple re-rolls on a single asset.

Cost predictability is where even good tools can fail silently. One tool might charge per generation regardless of resolution or complexity. Another might price by step count or output size. Neither is inherently better, but they behave very differently under different workloads. Here’s a scenario I ran with approximate numbers:

- Tool A: $0.05 per generation, 20 generations per asset set = $1.00 per set. If you need 10 rounds of that set to get the right images, that’s $10.00.

- Tool B: $0.02 per generation but charges extra for high-resolution outputs and in-painting. The same 20 generations cost $0.40 base, but add $0.50 per in-paint edit, and the total climbs fast: two edits already bring the set to $1.40.

The best tool on paper can be the worst for your wallet if you need many iterations or heavy editing. What matters is whether the pricing model matches your actual usage pattern—bursty and experimental, or steady and volume-driven.
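To make that comparison concrete, here is a minimal Python sketch of the two pricing models from the scenario above. The function names are mine, the prices are the approximate numbers from the example, and Tool B’s high-resolution surcharge is left out because the scenario doesn’t give a number for it. Treat it as a template for plugging in a real price sheet, not as any vendor’s actual pricing.

```python
def tool_a_cost(generations: int, price_per_generation: float = 0.05) -> float:
    """Flat per-generation pricing (Tool A in the scenario)."""
    return generations * price_per_generation


def tool_b_cost(generations: int, inpaint_edits: int,
                price_per_generation: float = 0.02,
                price_per_inpaint: float = 0.50) -> float:
    """Cheaper base price plus a surcharge per in-paint edit (Tool B).

    Assumption: the high-resolution surcharge is ignored here.
    """
    return generations * price_per_generation + inpaint_edits * price_per_inpaint


def breakeven_inpaints(generations: int, a_price: float = 0.05,
                       b_price: float = 0.02,
                       inpaint_price: float = 0.50) -> float:
    """In-paint edits per set at which Tool B costs the same as Tool A."""
    return generations * (a_price - b_price) / inpaint_price


if __name__ == "__main__":
    for edits in (0, 1, 2, 5):
        a = tool_a_cost(20)
        b = tool_b_cost(20, edits)
        print(f"{edits} in-paint edits: Tool A ${a:.2f} vs Tool B ${b:.2f}")
```

With these numbers, Tool B stays cheaper only while a 20-generation set needs at most one in-paint edit. The break-even sits at about 1.2 edits per set, so the second edit already pushes Tool B past Tool A’s $1.00.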

One blind spot worth highlighting: no tool transparently shows failure costs. Every regeneration from a bad prompt, a misread instruction, or a hallucinated object still consumes credits. That cost is invisible until you review your usage log. I budget an extra 30% on top of my expected generation count to account for these re-rolls.
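The 30% buffer is simple arithmetic, but it’s easy to forget when you’re estimating a job. A small sketch, assuming you plan in whole generations and round up; the function name and the default are just my convention, not anything a tool provides:

```python
def budgeted_generations(expected: int, buffer_percent: int = 30) -> int:
    """Expected generation count plus a re-roll buffer, rounded up.

    Integer arithmetic is used so the rounding is exact.
    """
    return (expected * (100 + buffer_percent) + 99) // 100


if __name__ == "__main__":
    for expected in (20, 50):
        print(expected, "planned ->", budgeted_generations(expected), "budgeted")
```

For a 50-generation batch that means budgeting for 65 generations, and for a 20-generation set, 26.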

What I Still Can’t Recommend After Testing

This evaluation has clear boundaries. It does not cover video generation, multi-model pipelines, or enterprise-scale deployment. Those systems introduce different constraints: GPU scheduling for longer renders, asset management for large teams, and API rate limits that affect automated workflows. I haven’t tested any of those scenarios, so I won’t speculate.

I also want to be honest about use-case dependence. A marketer running a hundred variations of an ad campaign will prioritize speed and batch consistency above all else. An artist refining a single composition will prioritize editability and style control. Those are different tools, even if they use the same underlying model. Nano Banana AI sits in a practical middle ground—it handles both reasonably well—but that doesn’t make it the right choice for every workflow.

One question I cannot answer yet: whether tools that excel at still frames can also handle frame-consistent video styles reliably. The technical requirements are different, and I haven’t tested video generation through this lens. If you’re evaluating an AI video generator, you’ll need to build your own test suite focused on temporal consistency and motion coherence.

Run Your Own Three-Day Stress Test Before You Commit

Rather than end on conclusions, I’ll leave you with a repeatable test plan. It takes three days, costs minimal credits, and will tell you more about a tool than any set of benchmark images.

Day 1: Generate 20 images with varying prompt complexity. Start simple (“a red apple on a white table”), then escalate to multi-element prompts with spatial references and style constraints. Measure median time per generation and note how many outputs need re-rolls due to adherence failures.

Day 2: Attempt five image-to-image edits. Take one of your Day 1 outputs and try changing a background element, adjusting a color palette, or adding a subject. Track how many regenerations each edit requires and whether the style holds across iterations.

Day 3: Simulate a batch of 50 generations. This is the volume test. Measure total time, credit consumption, and how many assets you consider usable without further changes.
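The measurements from the three days can be logged with a small harness like the one below. This is a sketch under assumptions: `generate` stands in for whatever call or manual step produces an image in the tool you’re testing, `is_usable` stands in for your own judgment of each output, and pricing is assumed to be a flat number of credits per generation. None of these names come from a real tool’s API.

```python
import statistics
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToolRun:
    """Measurements for one tool across one batch of prompts."""
    tool: str
    credits_per_generation: float  # assumption: flat per-generation pricing
    durations: list[float] = field(default_factory=list)
    usable: int = 0

    @property
    def generations(self) -> int:
        return len(self.durations)

    @property
    def median_seconds(self) -> float:
        return statistics.median(self.durations)

    @property
    def reroll_rate(self) -> float:
        """Share of outputs that were not usable as generated."""
        return 1 - self.usable / self.generations

    @property
    def credits_per_usable(self) -> float:
        spent = self.generations * self.credits_per_generation
        return spent / self.usable if self.usable else float("inf")


def run_batch(tool: str, credits_per_generation: float, prompts: list[str],
              generate: Callable[[str], object],
              is_usable: Callable[[str, object], bool]) -> ToolRun:
    """Time each generation and count how many outputs are usable."""
    if not prompts:
        raise ValueError("need at least one prompt")
    run = ToolRun(tool, credits_per_generation)
    for prompt in prompts:
        start = time.perf_counter()
        image = generate(prompt)  # hypothetical stand-in for the real tool
        run.durations.append(time.perf_counter() - start)
        if is_usable(prompt, image):
            run.usable += 1
    return run


if __name__ == "__main__":
    # Placeholder generator and judgment, only to show the harness running.
    prompts = ["a red apple on a white table"] * 5
    run = run_batch("demo tool", 1.0, prompts,
                    generate=lambda p: p.upper(),
                    is_usable=lambda p, img: True)
    print(run.tool, run.generations, run.reroll_rate, run.credits_per_usable)
```

Run it once per tool with the same prompt list, then compare `median_seconds`, `reroll_rate`, and `credits_per_usable` side by side. The last number is the one I’d weigh most, because it folds failed generations into the price of each asset you actually keep.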

Take notes and compare at least two tools with different pricing models. Nano Banana AI makes a clean test case because its credit structure is straightforward and its editing workflow is integrated, but the real value is in running the comparison yourself. The tool that looks best on day one may not survive day three, and that is exactly what you need to find out before you commit.