The week before a product launch is rarely about high-level strategy; it is usually about friction. You are waiting on a designer to swap a background from a “home office” to a “corporate lobby.” You are waiting for a color correction on a hero image because the primary brand purple looks slightly off in the mobile mockup. These are not creative breakthroughs; they are administrative hurdles. In the traditional agency or in-house workflow, these minor adjustments often carry a two-week lead time. This is the “asset tax” that product teams pay for wanting to iterate on ad performance in real time.

Decoupling your creative output from these design queues doesn’t mean firing your design team. It means shifting the bottleneck. By treating generative tools and AI-driven editors as an iterative scratchpad, product teams can ship high-fidelity ad variations hours after a hypothesis is formed, rather than weeks. The goal is to reach a state of launch velocity where the creative capacity of the team is limited only by their ability to test, not by the availability of a specialized designer’s calendar.

The High Cost of the Design Waiting Room

In many marketing departments, the creative process is a linear conveyor belt. A product manager writes a brief, a creative director reviews it, a designer executes, and a feedback loop begins. During a high-stakes launch, this linear path is a liability. If an early A/B test shows that users in the EMEA region bounce when they see North American-style interiors in an ad, the team should be able to swap that environment immediately.

Instead, “design debt” accumulates. Small tweaks (lighting adjustments, object removal, or demographic shifts in lifestyle photography) clog the creative queue. Designers can end up spending more of their time on “production” work than on “creative” work. For product teams, this results in stalled campaigns and missed opportunities to optimize during the crucial first 48 hours of a launch.

The sweet spot for AI integration is exactly here: the production layer. When product teams take ownership of asset refinement, they aren’t replacing the designer’s eye for brand soul; they are simply removing the manual labor of pixel manipulation. This allows the team to maintain professional standards without the overhead of a formal re-briefing for every minor variation.

Hypothesis Testing with Prompt-Based Prototyping

The first stage of bypassing the bottleneck is conceptualization. Traditionally, you might browse stock photo sites for hours to find a “close enough” match for a concept. With modern generative models, you can move from an abstract idea to a high-fidelity visual in seconds.

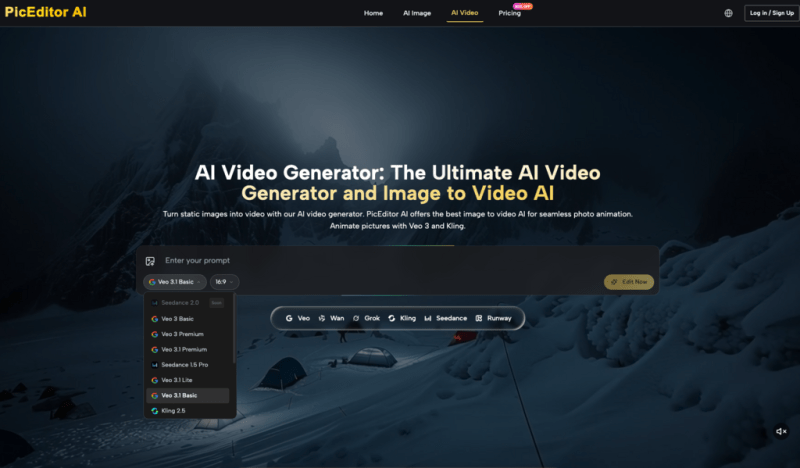

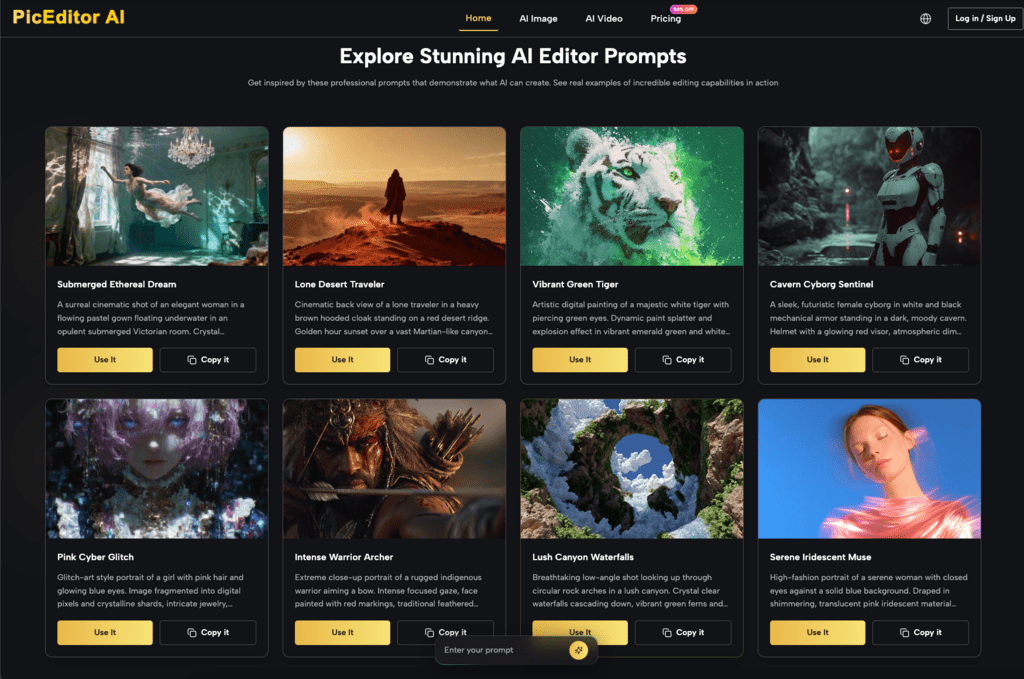

Using an AI Image Editor allows a team to visualize product placement in diverse environments before a single cent is spent on a physical photo shoot. For instance, if you are launching a new productivity tool, you can use models like Flux or Nano Banana to generate scenarios ranging from a minimalist cafe in Tokyo to a high-rise office in London. This isn’t just about getting a pretty picture; it’s about validating which visual environment resonates with your target audience’s aspirations.

However, there is a distinct risk of “prompt drift.” It is easy to fall into the trap of generating five hundred images and assuming that volume equals quality. In practice, generating hundreds of images is often less effective than deeply refining one or two strong concepts. High-volume generation without a specific hypothesis usually leads to an “uncanny valley” of assets that feel disconnected from the brand’s core identity. At this stage, the AI should be used as a low-cost prototyping tool to decide which “look and feel” will move to the next stage of production.

Precision Refinement: When Generation Isn’t Enough

One of the biggest mistakes product teams make is relying solely on text-to-image generation. For a brand, total regeneration is often the enemy of consistency. If you generate a new image every time you want a change, the product’s form factor—the specific curves of a bottle, the exact UI of an app, or the specific shade of a logo—will shift. This “hallucination” of brand assets is a quick way to lose consumer trust.

This is where the AI Image Editor becomes a surgical tool rather than a magic wand. Instead of asking the AI to “make a new version,” the focus shifts to modifying an existing, approved asset. We are currently in a transition period where the most effective ad creatives are “hybrid” assets—human-shot hero products placed into AI-generated or AI-enhanced environments.

By using an AI Photo Editor, teams can perform surgical tasks that previously required advanced Photoshop skills. This includes object removal (taking out a distracting power cord in the background), face swapping to better represent local demographics in global campaigns, and background replacement that respects the lighting of the original subject. The “Good Enough” bar for social media ads is often lower than for a Super Bowl spot, but the result must still feel intentional. Identifying when an AI-edited asset is ready to publish on social channels and when it requires a human designer’s final touch is a skill that product teams must develop through trial and error.

Operationalizing the Iteration Loop

To move fast, you need a repeatable workflow. You cannot treat every ad as a bespoke art project. A tactical workflow involves taking a single high-quality hero image and running it through a series of “swaps” to create a testing matrix.
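As a rough illustration of what a testing matrix can look like in practice, the sketch below crosses one hero image with a few background and palette swaps and produces a named list of variants to request from an editor. Every name here (`Variant`, `build_test_matrix`, the background and palette labels) is hypothetical and chosen for the example; it does not call any real editing tool or ad platform.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Variant:
    """One cell of the testing matrix (all fields are illustrative labels)."""
    hero: str
    background: str
    palette: str

    @property
    def name(self) -> str:
        # A stable name makes it easy to match ad results back to the swap.
        return f"{self.hero}__{self.background}__{self.palette}"


def build_test_matrix(hero: str, backgrounds: list[str], palettes: list[str]) -> list[Variant]:
    """Cross every background with every palette for a single hero image."""
    return [Variant(hero, bg, pal) for bg, pal in product(backgrounds, palettes)]


# Hypothetical swaps for one approved hero shot.
matrix = build_test_matrix(
    hero="hero_v1",
    backgrounds=["home_office", "corporate_lobby"],
    palettes=["warm_sunset", "cool_morning"],
)

for variant in matrix:
    print(variant.name)
```

Keeping the matrix small and tied to a specific hypothesis (here, two backgrounds by two palettes) keeps the number of variants manageable and makes the later comparison easier to read.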

For example, using the AI Photo Editor on PicEditor AI, a marketer can quickly swap color palettes from warm sunset tones to cool morning light. These variations are then fed into Meta’s or Google’s automated ad platforms to see which lighting profile yields a higher click-through rate. This level of granular testing was economically impractical for most teams two years ago.
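When comparing click-through rates between two lighting variants, it helps to check whether the gap is larger than random noise would produce. The sketch below uses a standard two-proportion z-test, which is one common choice and not something the ad platforms themselves prescribe. The function name and the click and impression counts are made up for illustration; real numbers would come from your ad account exports.

```python
import math


def two_proportion_z_test(clicks_a: int, impressions_a: int,
                          clicks_b: int, impressions_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in CTR between B and A."""
    ctr_a = clicks_a / impressions_a
    ctr_b = clicks_b / impressions_b
    # Pooled CTR under the assumption that both variants perform the same.
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    if std_err == 0:
        raise ValueError("CTR is 0% or 100% in both variants; the test is undefined.")
    z = (ctr_b - ctr_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Illustrative numbers only: warm sunset (A) vs. cool morning (B).
z, p = two_proportion_z_test(clicks_a=120, impressions_a=10_000,
                             clicks_b=160, impressions_b=10_000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

A small p-value suggests the difference is unlikely to be chance alone, but with many variants in a matrix, some will look like winners by luck, so treat a single result as a prompt for a follow-up test, not a verdict.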

Another critical step is upscaling and enhancement. AI-generated images often suffer from “soft” textures or low-resolution artifacts that scream “generated.” Using enhancement tools to sharpen edges and add realistic grain can make a $0 asset look like a $5,000 production. However, some practical caution is necessary: it is not yet clear whether platform-specific quality filters (like those used by Google Ads) will eventually penalize assets with specific AI signatures. For now, manual review remains the most reliable way to ensure an image doesn’t contain “melted” fingers or illogical shadows that could trigger a low-quality score in an ad auction.

The Limits of Autonomy: Where Strategy Trumps Tools

While the technical barriers to asset creation have collapsed, the strategic barriers have actually become higher. Because anyone can now generate a high-quality image of a “woman drinking coffee in a modern kitchen,” the competitive landscape is quickly becoming saturated with a specific type of “AI sameness.” If every brand in your niche is using the same base models (like Flux or Seedream), your ads will begin to blend into a generic, glossy aesthetic that users have already learned to tune out.

AI cannot replace the brand’s “soul.” It cannot understand the subtle psychological triggers of your specific audience—the “inside jokes” of a subculture or the specific pain points of a niche industry. A creative director is still needed to provide the “why” behind the “what.” The AI Photo Editor is the brush, but the strategy is the hand.

There is also significant uncertainty about the long-term impact of 100% AI-generated visual identities. While these tools are excellent for short-term ad performance and rapid testing, we don’t yet know how they affect long-term brand equity. Does a brand that never uses “real” photography eventually feel hollow to a consumer? We are in the middle of a massive live experiment. The smartest product teams are those who use AI to move fast and break things in the ad account, while keeping their core brand identity grounded in human-led creative direction. Use the tools to bypass the design queue for your launch, but don’t let the tools dictate the brand’s ultimate direction.