Many people have musical ideas but no practical way to develop them. They write lyrics in notes apps. They describe moods out loud. They imagine a soundtrack for a product launch, a video essay, a classroom project, or a gift for a friend. But when the moment comes to make that idea audible, they hit the same wall: they are not confident in a digital audio workstation, they do not know arrangement terminology well enough, and they do not have a composer on call. That is why an AI Music Generator matters in a very specific way. It is not only a music tool. It is an access tool.

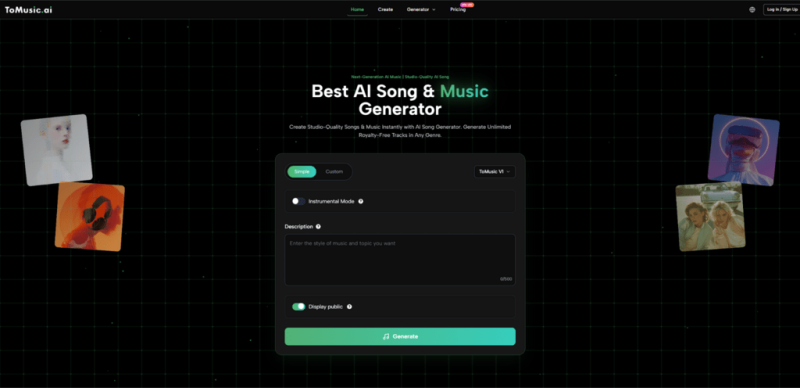

That distinction matters because access changes who gets to participate. If the first layer of music creation is no longer engineering knowledge but descriptive language, then more people can move from intention to output. On the official ToMusic pages, that logic is visible everywhere. The product lets users choose Simple or Custom mode, toggle instrumental generation, add styles, enter lyrics, and choose among four models. The system is built around inputs that non-producers can still understand. In my view, that changes what it takes to get started.

Why So Many People Stop Before The First Draft

The traditional barriers to music creation are not always artistic. Often they are procedural.

A person may know the emotional direction of a song but not how to build chord progressions. They may know the hook but not how to produce a demo. They may know the soundtrack a scene needs but have no efficient way to find or make it. These are not small issues. They are the reasons many ideas never become shareable.

The gap becomes even larger for people whose relationship to music is primarily expressive rather than technical. Writers think in lines. Filmmakers think in scenes. Teachers think in memory cues and tone. Brand teams think in identity and feeling. None of those starting points are wrong. They are simply not the language of a conventional studio workflow.

ToMusic seems built to meet that reality halfway. The official FAQ says the platform analyzes prompts or lyrics, identifies elements like genre, mood, tempo, and instrumentation, and generates music accordingly. That means users can start from concepts they already know how to describe.

How The Platform Lowers The First Barrier

The most important thing a tool like this can do is lower the cost of trying.

It Lets Users Begin With Familiar Language

If a user can describe a piece as “soft piano with reflective vocals,” “upbeat pop with bright energy,” or “slow cinematic instrumental for emotional narration,” they already have enough to start. That is a big change from tools that assume prior fluency in production software.

It Separates Exploration From Precision

Simple mode and Custom mode are useful precisely because they recognize two different stages of confidence. Sometimes users want to explore a feeling. Other times they want to direct a track more explicitly with styles, lyrics, and other details. The platform does not force those stages into one interface behavior.

It Treats Instrumental Music As A Legitimate Need

A lot of non-producers do not actually want a full song with vocals. They want usable background music, scoring, or a mood-supporting piece. The instrumental option makes that possible without forcing every user into the same output category.

Why The Multi-Model Structure Helps New Users

The official FAQ outlines four models, each with a different focus. V4 emphasizes vocals and creative control. V3 highlights richer harmonies and more innovative patterns. V2 is associated with longer compositions and tonal depth. V1 is the more balanced, streamlined option.

For advanced producers, that may sound like technical positioning. For newer users, it can be even more valuable because it turns abstract complexity into understandable choices.

| User need | Model direction | Why it helps non-producers |
|---|---|---|
| I want to try ideas quickly | V1 | Easier to start without overthinking |
| I want a longer evolving piece | V2 | Useful when duration matters |
| I want a fuller arrangement feel | V3 | Helps when the track needs more density |
| I care most about expressive vocals | V4 | Better fit for lyric-centered projects |

This kind of structure is helpful because it gives beginners meaningful decision points without overwhelming them with low-level controls.

A Realistic Beginner-Friendly Workflow

The official process is simple enough that a newcomer can follow it without needing a long setup phase.

Step One: Start From The Clearest Part Of Your Idea

If you know the mood, start there. If you know the lyrics, start there. If you only know that the piece should be instrumental, begin with that. The point is not to solve everything first. The point is to choose the strongest starting clue.

Step Two: Choose The Simplest Suitable Setup

Use Simple mode for broader exploration. Use Custom mode if you already have more defined material such as title, styles, or lyrics. Pick instrumental mode if vocals are not necessary.

Step Three: Select A Model Based On The Main Goal

Do not chase an abstract best model. Choose the model that best matches what you care about most right now, whether that is speed, depth, arrangement, or vocal quality.

Step Four: Generate, Then Improve Based On What You Learned

Listen to the output and revise based on what it teaches you. The official FAQ itself recommends adjusting prompts, trying a different model, refining lyrics, or adding more precise style tags.

Why This Changes The Experience For Specific Groups

The accessibility argument becomes stronger when tied to actual user types rather than broad claims.

Writers Gain A Path From Text To Sound

Writers often have lines, concepts, and choruses before they have any music. For them, the problem is not imagination. It is conversion. A lyrics-supporting platform shortens the gap between written expression and audible form.

Small Creators Gain Independence

A solo creator making YouTube videos, podcasts, or social clips may not have the budget or time to source custom music for every concept. A platform that can turn short descriptions into draft tracks gives them more autonomy.

Educators Gain A New Format For Communication

Not every classroom song needs studio-level complexity. Sometimes the value lies in making information more memorable or emotionally engaging. A tool that starts from text can support that kind of creation more directly.

Non-Technical Teams Gain Shared Reference Points

When several people discuss tone verbally, confusion is easy. Once a version is generated, the conversation becomes more concrete. The team can say what works and what does not based on something they can hear.

Where Lyrics Expand Access Even Further

The lyric pathway is particularly important because words are often the most approachable form of musical authorship.

People who would never open a production suite may still write verses, poems, refrains, or hooks. They may feel deeply connected to what a song should say but unable to produce how it should sound. A platform that allows lyric input changes that equation.

This is where Lyrics to Music AI becomes more than a convenient phrase. It points to a creative doorway for users who are expressive but not technical. They can hear how their language sits in rhythm, where the emotional emphasis lands, and whether their lines feel singable. That feedback can improve both the song draft and the writing itself.

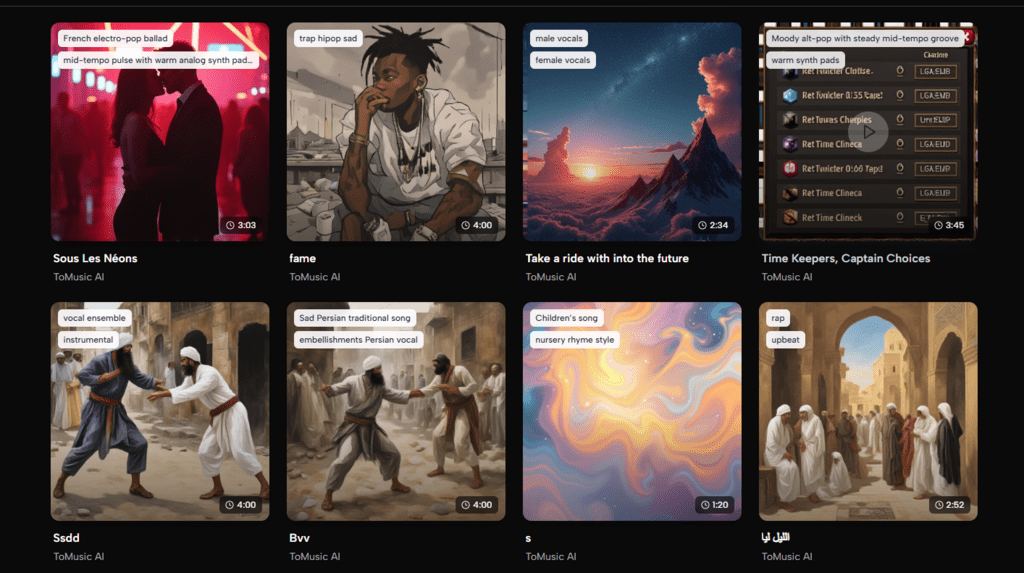

What The Official Use Cases Reveal

The site also points toward practical scenarios such as content creation, marketing, and other commercial applications. That is significant because it suggests the platform is not aimed only at hobby play. It is trying to support people who need music in real workflows.

At the same time, the product’s structure still feels approachable enough for casual use. That combination is valuable. A good accessibility tool should not feel childish to professionals or intimidating to newcomers. It should meet both groups at a usable level.

What The Licensing And File Options Add

The official materials mention commercial rights, royalty-free use, downloadable WAV and MP3 files, and certain higher-level functions such as stem extraction and vocal removal. These details matter because accessibility is not only about generation. It is also about what happens after generation.

If the output cannot be downloaded, reused, edited, or integrated into real projects, access stops too early. The fact that the platform frames the music as commercially usable and portable suggests a broader creative purpose. A beginner can make something and actually use it. That makes the learning process more meaningful.

Where A Grounded View Is Still Necessary

An accessible tool is not the same thing as an effortless one. A more honest reading helps users get better results.

Clear Input Still Matters

The platform lowers technical barriers, but it does not remove the need for intention. A vague request still tends to produce a less focused outcome.

Iteration Remains Necessary

New users sometimes assume accessibility means one perfect result immediately. The official guidance suggests otherwise. Regeneration, prompt refinement, lyric edits, and model switching are part of the normal process.

Human Taste Still Finishes The Work

Even if the system can produce the sound quickly, someone still needs to decide what feels right, usable, or emotionally convincing. Accessibility opens the door. It does not decide the destination.

Why This Matters Beyond Convenience

The deeper value of ToMusic is not only that it saves time. It changes who can participate in early-stage music creation.

That matters because a great deal of creative work stalls not for emotional reasons but for procedural ones. People cannot get from intention to first draft. They cannot hear the idea quickly enough to keep shaping it. They stop because the tools do not speak their language.

ToMusic appears to move closer to that language. It lets people begin with descriptions, lyrics, styles, and broad musical intentions. It offers an instrumental path for those who do not need vocals. It gives model choices that are understandable even without deep technical background. And it encourages revision rather than pretending the first result should solve everything.

For non-producers, that is not a small improvement. It is the difference between having an idea about music and having something they can actually hear, judge, share, and improve.